
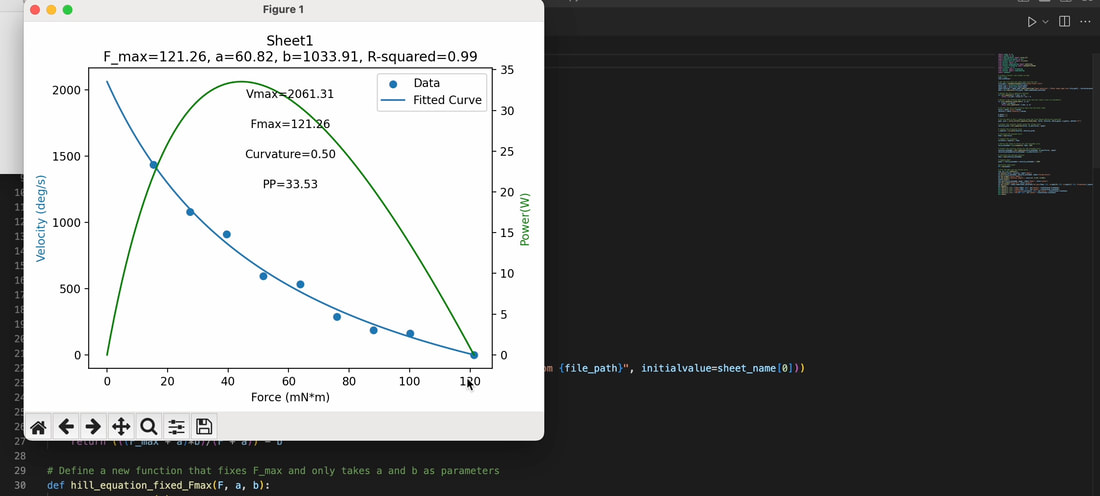

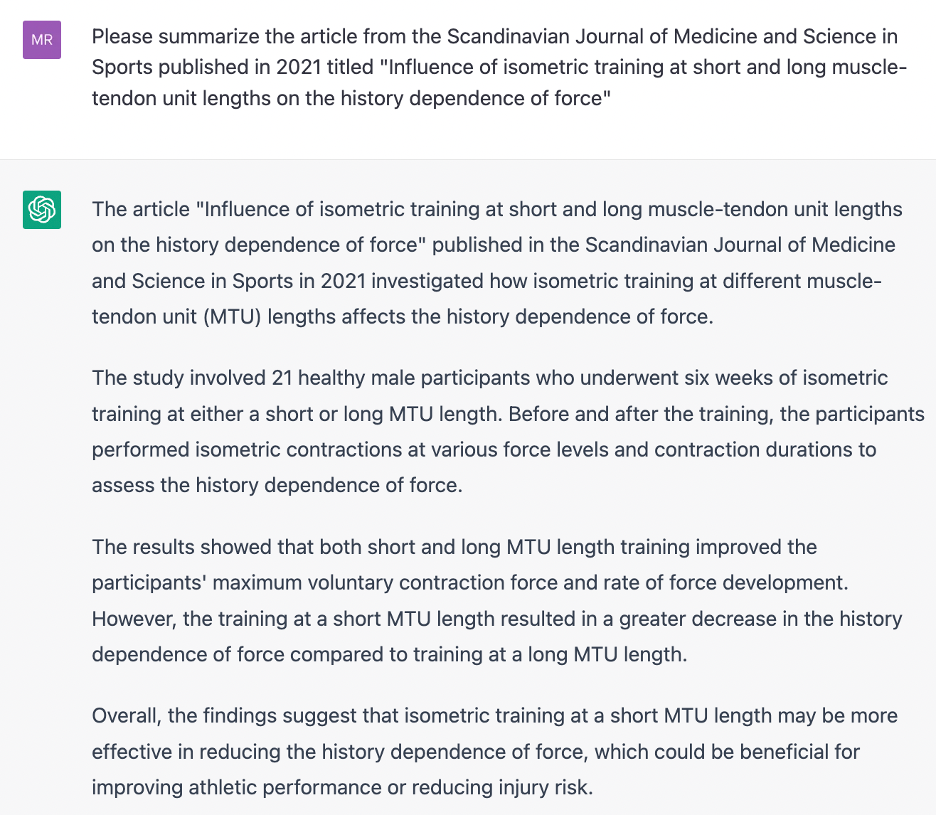

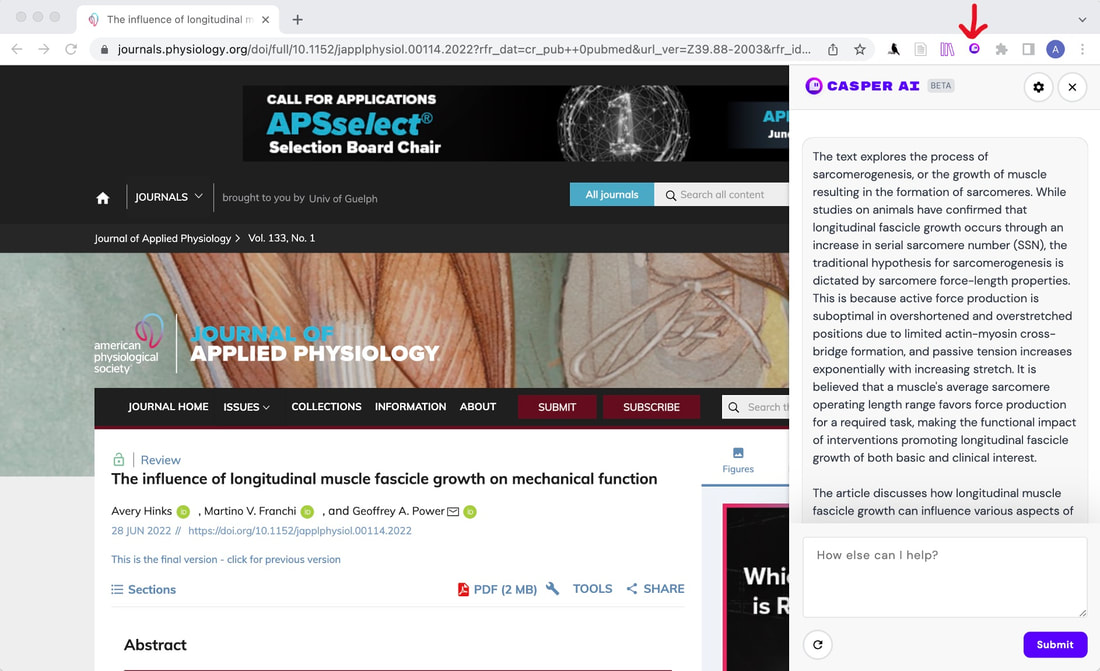

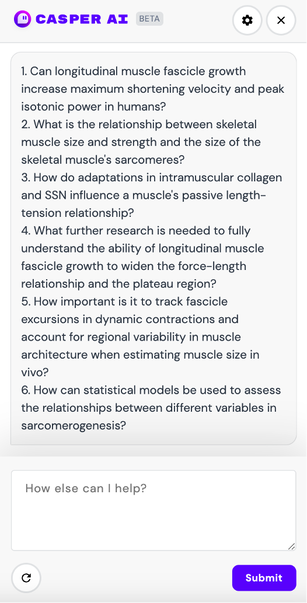

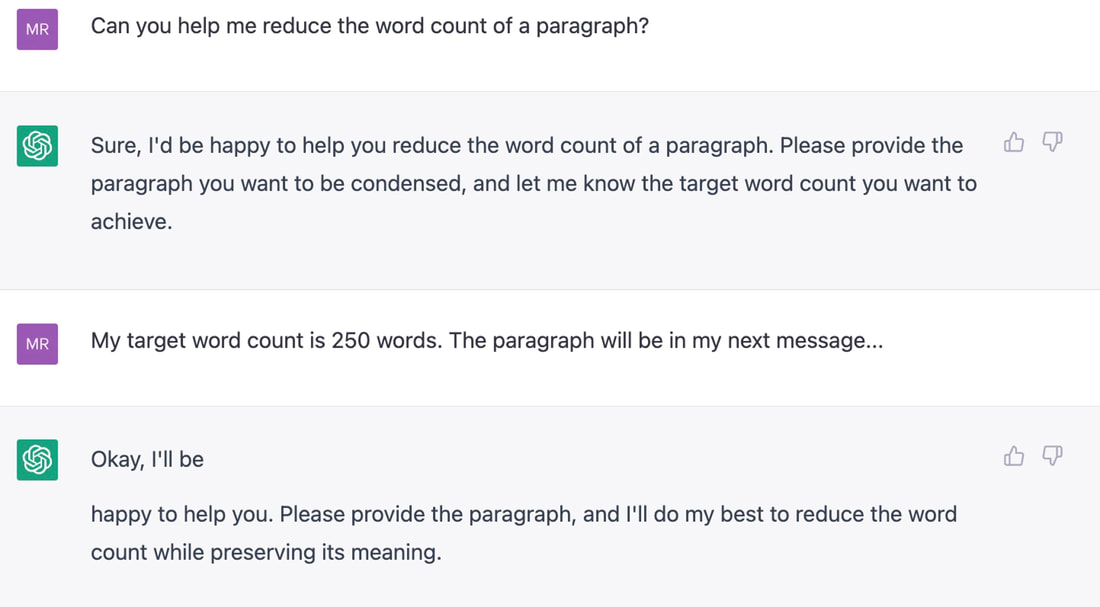

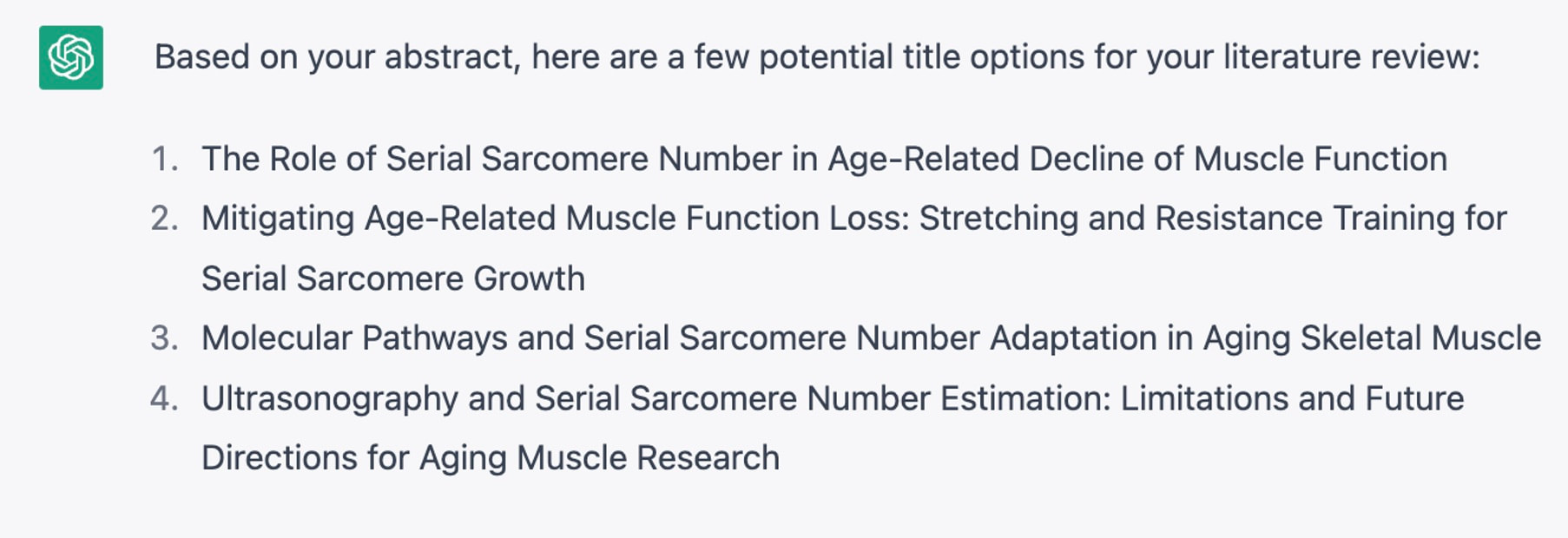

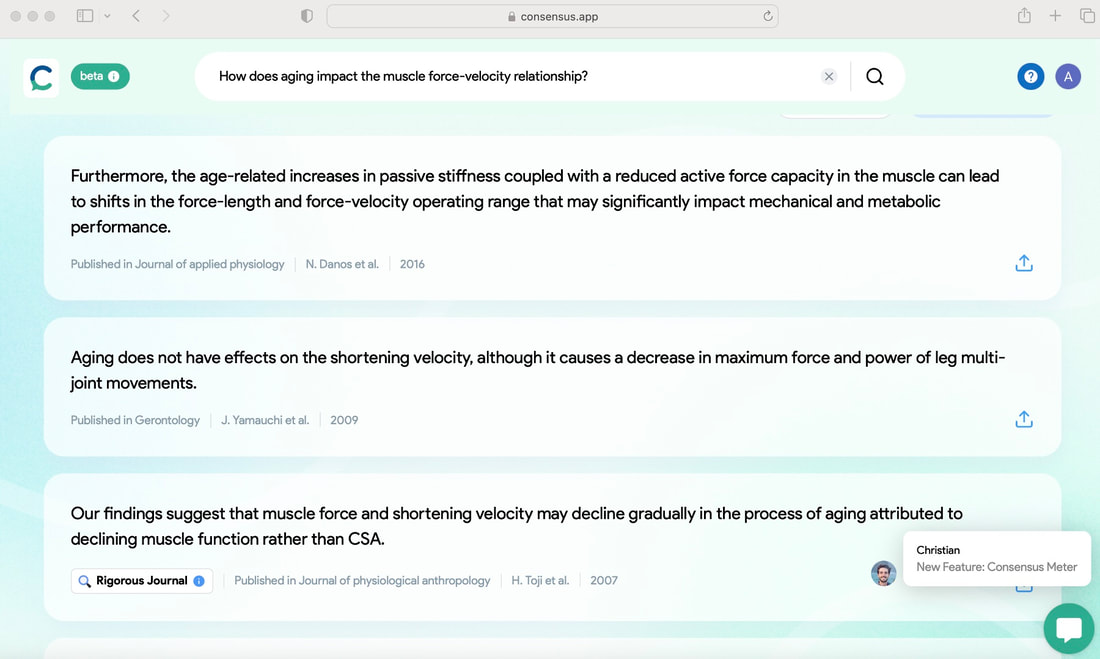

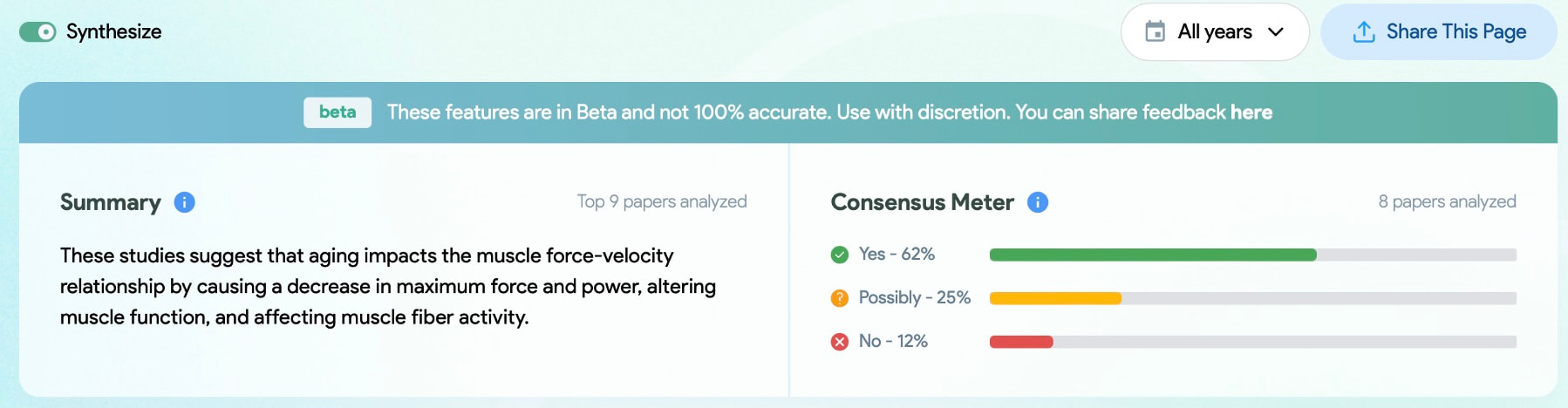

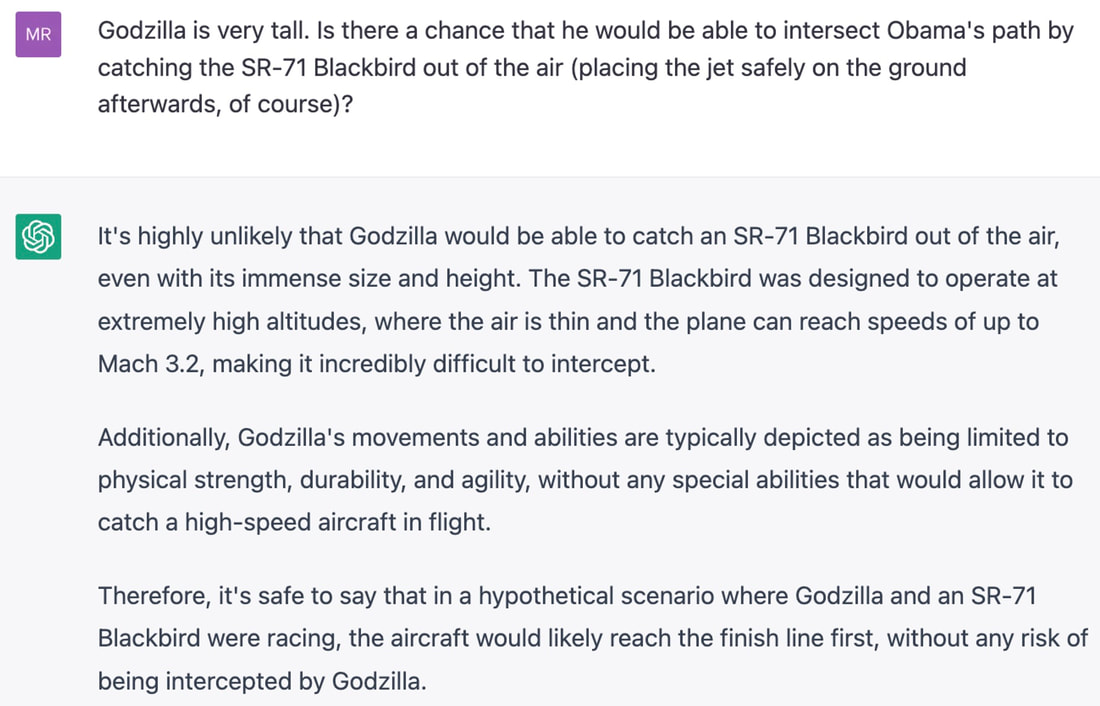

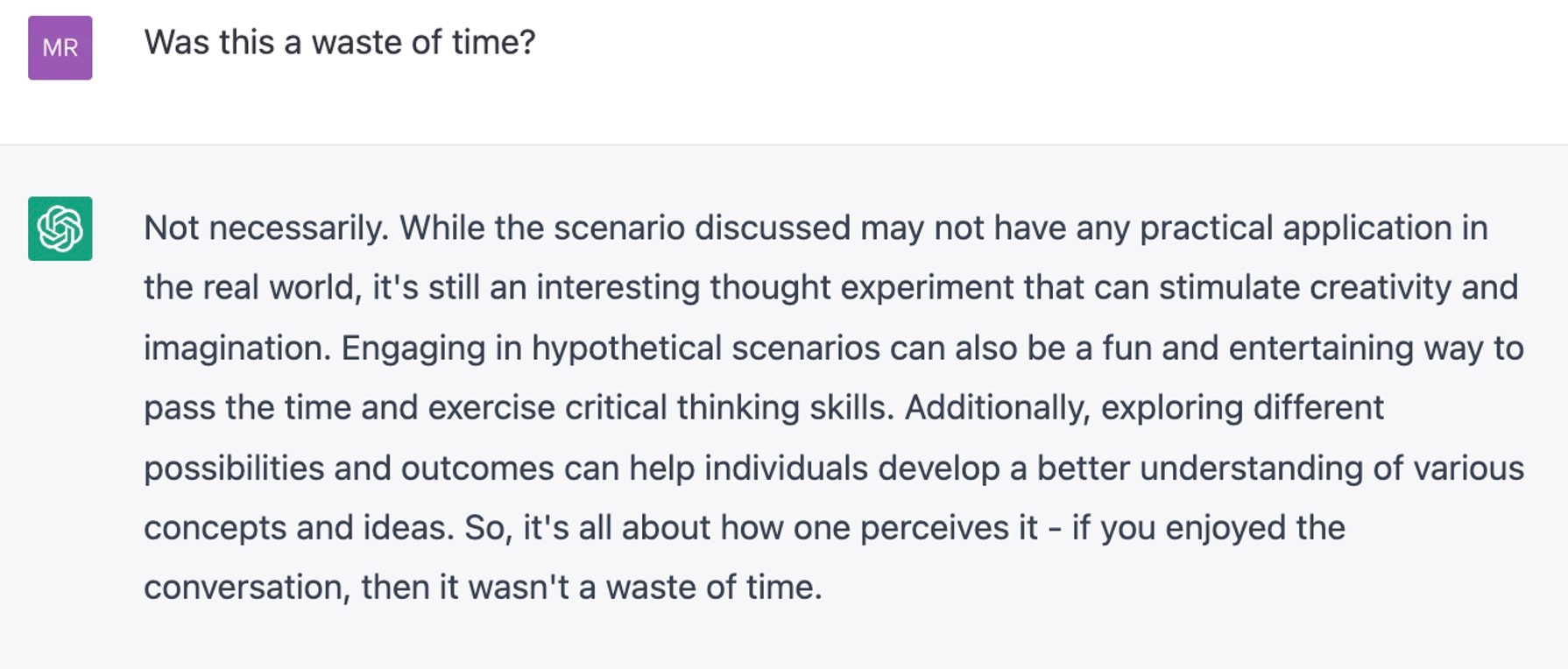

By Avery Hinks

For the past few weeks, it has seemed that every time I open Twitter, I see news of a new AI tool. I feel like I’ve been living in The Matrix, only with fewer cool sunglasses and leather trench coats. Since I’m not Keanu Reeves, this overwhelmed me at first. However, I’ve begun to embrace the reality of a rapidly evolving landscape of AI tools.

As graduate students, we have a diverse list of tasks we must complete daily. If we’re not performing experiments, we’re analyzing data. If we’re not analyzing data, we’re writing. If we’re not writing, we’re procrastinating from writing by making a presentation or marking undergraduate student assignments. If we’re not doing any of these things, we might be wondering what we want to do with our lives and why we are here. Or we’re taking a nap, intentional or not.

The above paragraph is an exaggeration, and it’s probably also an exaggeration to say that AI can solve all those problems. What I can say is that I’ve found several ways AI can enhance both my experience and the quality of my work as a graduate student. Here are five examples!

1. Coding

Back in December, I wrote this knowledge translation about how to fit data to a curve in Excel. I knew curve-fitting could be done in a programming language such as Python; however, learning how to code seemed daunting, and I didn’t know where to start. Despite being time-consuming, the Excel method was familiar, so I stayed inside my comfort zone. Then, last week, I asked ChatGPT to write me code to fit force and velocity data to Hill’s force-velocity equation. Within half an hour, I had working code.
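
The script itself is not reproduced in this post, so the block below is only a rough sketch of what a Hill fit can look like, not the code ChatGPT produced. It assumes Hill’s equation in the form (F + a)(V + b) = (F0 + a)b and uses a linearized least-squares fit so that it runs with the Python standard library alone; a nonlinear fit with SciPy’s `curve_fit` is a common alternative. The function names (`fit_hill`, `solve_linear_system`) and the demo numbers are made up for illustration.

```python
"""Illustrative sketch: fit force-velocity data to Hill's equation.

Assumed form of Hill's equation: (F + a) * (V + b) = (F0 + a) * b
Rearranged, it is linear in three unknown coefficients:
    F * V = F0*b - a*V - b*F
so ordinary least squares can recover a, b and F0.
"""
import math


def solve_linear_system(matrix, rhs):
    """Solve a small square system by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [list(row) + [value] for row, value in zip(matrix, rhs)]
    for col in range(n):
        # Swap in the row with the largest entry in this column.
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for row in range(col + 1, n):
            factor = aug[row][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[row][k] -= factor * aug[col][k]
    # Back-substitution on the upper-triangular system.
    solution = [0.0] * n
    for row in reversed(range(n)):
        known = sum(aug[row][k] * solution[k] for k in range(row + 1, n))
        solution[row] = (aug[row][n] - known) / aug[row][row]
    return solution


def fit_hill(forces, velocities):
    """Return Hill parameters fitted to paired force/velocity measurements."""
    # Design matrix columns: 1, V, F. Target: F * V.
    rows = [(1.0, v, f) for f, v in zip(forces, velocities)]
    targets = [f * v for f, v in zip(forces, velocities)]
    # Normal equations (X^T X) c = X^T y for the three coefficients.
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    xty = [sum(r[i] * t for r, t in zip(rows, targets)) for i in range(3)]
    c0, c1, c2 = solve_linear_system(xtx, xty)
    a, b = -c1, -c2
    f0 = c0 / b
    # R^2 is computed on velocity predicted from force.
    predicted = [b * (f0 - f) / (f + a) for f in forces]
    mean_v = sum(velocities) / len(velocities)
    ss_res = sum((v - p) ** 2 for v, p in zip(velocities, predicted))
    ss_tot = sum((v - mean_v) ** 2 for v in velocities)
    # Power (F * V) peaks at the force where its derivative is zero.
    f_opt = math.sqrt(a * a + a * f0) - a
    return {
        "a": a,
        "b": b,
        "F0": f0,                      # predicted maximum (isometric) force
        "Vmax": b * f0 / a,            # predicted velocity at zero force
        "curvature": a / f0,
        "peak_power": f_opt * b * (f0 - f_opt) / (f_opt + a),
        "r_squared": 1.0 - ss_res / ss_tot,
    }


if __name__ == "__main__":
    # Made-up, noise-free data generated from a = 2.5, b = 10, F0 = 10.
    demo_forces = [1.0, 2.0, 4.0, 6.0, 8.0, 9.0]
    demo_velocities = [10.0 * (10.0 - f) / (f + 2.5) for f in demo_forces]
    for name, value in fit_hill(demo_forces, demo_velocities).items():
        print(f"{name}: {value:.3f}")
```

With real, noisy measurements the linearized fit weights errors differently from a direct nonlinear fit, so the two approaches can give slightly different coefficients; it is worth plotting the fitted curve against the data either way.
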
Here’s a video of my code in action, using some of my preliminary data from the rat plantar flexor muscles: https://drive.google.com/file/d/1KBH1onWo8F2OKX4RyRLXE_MMYdvAGWux/view?usp=sharing

As you can see, compared to the Excel method, I don’t have to spend an excessive amount of time copying and pasting data, and I don’t have to overload an Excel file with numerous curve-fitting graphs. This Python output gives me everything I need with a single click: the a and b coefficients, the maximum force and velocity, the curvature of the relationship, peak power, and the R² value.

The curve-fitting itself is not the best part of this example, though. The initial code that ChatGPT gave me was functional but lacked creativity. I originally had to type the Excel file path into the code itself, rather than having a window appear to select the Excel file as shown in the video. The graph also initially didn’t include power or the predicted maximum velocity. By going back and forth with ChatGPT, and being specific about what I needed, I was able to add these capabilities. In some cases, I was even able to pick up on the coding language and refine the code myself.

In the past, I have found that my research area is so specific that it is hard to find any help online for streamlining aspects of my data analysis. AI has helped me finally feel confident that I can use code to accomplish some of my very specific tasks!

2. Visually appealing images for presentations

Continuing the point above about my research being so specific that it’s hard to find help online, the same applies to finding images related to my research. This becomes especially annoying when it’s time to put together a PowerPoint presentation for a conference talk.
If I want an eye-catching opening slide, I either need to take a good photo of my research myself (and when you’re in the heat of completing an experiment, that’s not always possible) or hope I can find an image online that relates closely enough to what I want to show. AI image-generating platforms like Midjourney and Bing let me create any photorealistic image I want from scratch. I was shocked at how well this worked. Below are some examples.

For my master’s project, I put weighted vests on rats and had them run on a treadmill. Many of the photos I took of the rats with their weighted vests on looked ridiculous. I gave Bing Image Generator the prompt “A white rat wearing a weighted vest” and ended up with something more professional:

I do less research in our human lab than I used to, but if I ever present one of our lab’s human studies again, and it includes electromyographic data, I will likely use this on the title slide (“Electromyographic activity recording on computer”):

A significant portion of my current research involves measuring sarcomere lengths using a laser diffraction system. With the prompt “Measurement of sarcomere lengths by laser diffraction on a computer,” Bing Image Generator gave me an image that makes laser diffraction look way more interesting than I could ever make it look:

Some of my colleagues recently completed a study in which mice performed high-intensity interval training on a treadmill. I wanted to see if Bing Image Generator could make anything good for them (“Lab mouse running on a treadmill”):

My colleagues also noted that some of their mice looked a little chunky at the start of the training period, so I adjusted the prompt accordingly (“Fat lab mouse running on a treadmill”):

It’s not perfect, though. When I tried “Man seated in a Biodex dynamometer,” the result was horrifying. This would be a case where it’s better to just take a photo myself.
Still, I’m excited to see what images AI can help me create for future presentations!

3. Paper summaries

One limitation of ChatGPT is that the free version will not read more than 3000 words. This means you could not, for example, feed ChatGPT an entire journal article and ask it to summarize that article. Additionally, if you ask ChatGPT to summarize [enter article title here], it will give you a summary that seems accurate on the surface but is completely made up.

In the above example, several pieces of information provided by ChatGPT are incorrect. For example, the study included 13 participants (not 21) and 8 weeks of training (not 6). Training at a short muscle length also did not alter the history dependence of force at all, contrary to what ChatGPT suggested. (I know this because I wrote that paper.)

Thankfully, there are other AI tools designed specifically for summarizing papers. As above, I used papers I authored to test them out.

Casper is a Google Chrome extension that anyone can access. After adding it to Chrome, all you have to do is open the webpage for the article you wish to summarize, then click the Casper icon. Casper then gives a general summary of the paper.

This summary would not replace reading the paper. However, if you’ve already read the paper once or twice, a summary like this would be useful for jogging your memory on the topics covered. An example of when you might need this is when studying for your comprehensive exams.

Casper’s capabilities don’t stop there. After the initial summary is generated, you can scroll down, then click a three-dots icon. Options will appear that prompt Casper to elaborate, summarize more concisely, brainstorm ideas based on the content of the paper, suggest actions, or explain the paper like you’re a 5-year-old.

The two that I’ve found most impressive are the “Elaborate” and “Suggest actions” prompts.
“Elaborate” may be useful if you need more help jogging your memory on the specific concepts covered in the paper. “Suggest actions” may be useful if you’re trying to think of questions that could be asked based on the paper. These, again, might come in handy in the later stages of preparing for your comprehensive exams.

4. Reducing word counts

My first drafts are often too long. This is especially noticeable for abstracts, which usually have word limits of 250 or even 200 words. Recently, I gave ChatGPT the abstract for a literature review I’ve been working on, which was well over 300 words. I told ChatGPT to reduce the abstract to 250 words. ChatGPT did an excellent job, cutting sentences that didn’t need to be there while retaining the main points I wanted to communicate. The final word count was 232 words.

I also tried this on my literature review itself, feeding ChatGPT one paragraph at a time. This was less successful. It did reduce the word count of each paragraph, but it often cut pieces of information that I saw as essential to understanding the paper. Ultimately, going through my literature review and carefully considering the wording of each sentence myself took less effort than correcting each output that ChatGPT gave me.

There are two lessons here: 1) ChatGPT can help revise shorter pieces but maybe not longer pieces; and 2) always double-check the work that ChatGPT (and AI in general) gives you.

I also asked ChatGPT to suggest titles for my literature review based on the content of my abstract. Here’s what it gave me:

Not all of these were good fits, but they were pretty close, and they helped get my brain moving on coming up with a title myself. ChatGPT is the friend you always wished you had, one you can endlessly bounce ideas off without worrying about wasting their time.

5. Literature searching like you’ve always wanted

I don’t know if it’s just me, but I sometimes find myself frustrated by the results I get when performing literature searches on search engines like PubMed or Google Scholar. Don’t get me wrong, I’ve fine-tuned the process for those databases and have gotten great results. By linking together certain keywords with a “____” AND “____” format, PubMed usually helps me find the papers I’m looking for. The problem is, sometimes it doesn’t, or at least it isn’t immediately clear why it gave me a certain paper in the search results. Usually, I have to open the paper, then comb through it to see if it has the information I need. This process works, but I’ve always wondered if it could be more streamlined.

There is a new AI tool called Consensus. You start by typing in the exact question behind your literature search. Based on that question, Consensus will not only suggest papers, it will also highlight snippets from the text of each paper that directly answer your question. No more guessing games!

Additionally, if you click “Synthesize,” Consensus will summarize the findings of the suggested papers to give a consensus on your question. It will also provide a Consensus Metre to show any conflicting findings in the literature.

It’s important to note that this should not be a replacement for classic literature search techniques, but rather, like all the AI examples I’ve presented here, a tool to enhance existing practices.

Conclusion

Almost every day I find a new AI tool that makes me wonder how I can streamline my tasks as a graduate student. There are limitations that we should be cautious about at the moment, but with how quickly AI is evolving, many of the limitations I mentioned above could very well be addressed a year from now. My recommendation: try out the ideas I’ve suggested here, explore your own ideas as well, and try to have fun!
Bonus: Asking ChatGPT silly questions that will make you laugh

During a one-hour break on a long day of data collection, my colleague and I were too exhausted to do anything productive. Our brain capacity would not extend beyond playing around with ChatGPT, so we asked it silly questions. For example…

It quickly got out of hand, but ChatGPT kindly played along.


Author: Avery Hinks

Archives: September 2023
